☆ Yσɠƚԋσʂ ☆

- 2.13K Posts

- 2.47K Comments

6·41 minutes ago

The only dishonesty here is in your own comment. We know what alternative systems to liberalism are, and there are existing socialist states today. Pretending like nothing better is possible and nobody is offering any alternatives is the height of dishonesty.

10·1 hour ago

Anybody who starts talking about Russia to distract from what’s happening in their own liberal state descending into fascism is indeed a lib. It’s amazing how intellectually impoverished you people are that you think your transparent straw man is going to convince anyone. Nobody was talking about Russia here at all. It doesn’t matter what Russia is like, that’s not the standard you’re being held to. But of course liberals have no standards.

22·14 hours ago

The number of parties has fuck all to do with how democratic a particular system is. It’s whose interests the parties represent that matters. In capitalist societies, parties serve the interests of the ruling capital owning class, and the working majority simply gets to pick which member of the exploiting class will rule over them and repress them.

Every form of automation is turned against the worker under capitalism. AI will be no different here, and it might accelerate the collapse of the whole system.

And agree that education needs to change significantly at this point. A lot of education focuses on rote memorization, but what’s really important now is the ability to integrate the available information, evaluate it, and make decisions. Basically, applying dialectical thinking to the world. Also very much agree that USSR education was far better and broader. Becoming an intellectual was basically seen as the way to move up in society. In the west it’s just about making money which creates a very narrow and selfish horizon for people.

Teaching kids to experiment using computers in school is actually a really good idea. Once they develop the mindset it’s applicable everywhere, and easily transfers to working with the physical world too.

I do think we’ll need to restructure society in significant ways in the near future because technology is outpacing our existing social norms. Unfortunately these kinds of upheavals tend to be highly volatile socially.

Putin actually addressed that at SPIEF, and he rejected any proposals. It could be that Abramovich went there to give an ultimatum. It’s very clear to me that Russia isn’t going to compromise and isn’t looking to end the war right now. I think we might be approaching the end game. The US is fucked in Iran, the war just restarted again, and the US economy is on the brink because they’re running out of the reserve and won’t be able to stabilize gas prices for long now. Europe is collapsing as well, and nobody in the west cares about Ukraine at this point. It’s barely even mentioned in the news here now. It’s over.

18·1 day ago

he’s a method actor

34·1 day ago

My favorite trope is how libs will inevitably start screeching about Russia when faced with the fact that their ideology is midwifing fascism.

16·1 day ago

You are the master of fractal wrongness.

2·1 day ago

It’s clearly what you think you did. Have a good rest of your day.

4·1 day ago

Nowhere have you given any explanation for why you believe OpenRouter wouldn’t be representative of broader trends. What the links show is that Qwen, Kimi, and other models are in fact being used by American companies. It should be obvious that open models and APIs that cost a fraction of the price would be attractive to companies, but clearly that’s too fantastical of an idea for you to take seriously. Also love how you latched on to me misremembering GLM instead of Kimi. Really highlights how you’ve got no actual point to make here.

11·1 day ago

A lot of that has little to do with model capability and comes down to coding harnesses not meeting the expectations of the model. Here’s a great discussion regarding that https://xcancel.com/MrAhmadAwais/status/2050956678502420612

The DeepSeek team is aware of the tooling gap and is now working on its own harness to close it https://deepseekv4pro.com/news/deepseek-code-harness-team-claude-code-rival-report

4·1 day ago

My point was that there’s no reason to expect that the distribution is different outside OpenRouter. Also, companies absolutely do not prefer western models. https://www.youtube.com/watch?v=9baDOfwUzHQ

In fact, here’s an interview with the Airbnb CEO plainly saying they prefer Qwen to ChatGPT:

Cursor’s Composer 2 is based on GLM https://news.ycombinator.com/item?id=47452404

and here’s an article from just a few days ago stating that US companies are increasingly using DeepSeek https://finance.yahoo.com/sectors/technology/articles/many-us-tech-firms-turning-202000738.html

There’s zero evidence for your assertion that Chinese models are overrepresented on OpenRouter. The reality is that these are the models everybody is using right now, and there’s a niche market for overpriced American models.

5·2 days ago

I see little reason to expect that OpenRouter isn’t a representative sample. Your argument is based on the premise that people using OpenRouter behave substantively differently from other users so the distribution there wouldn’t be a good sample. But, what’s that argument based on? Why would users who pay for direct API access behave in a different fashion and prefer paying an order of magnitude more for models with comparable capability from American companies?

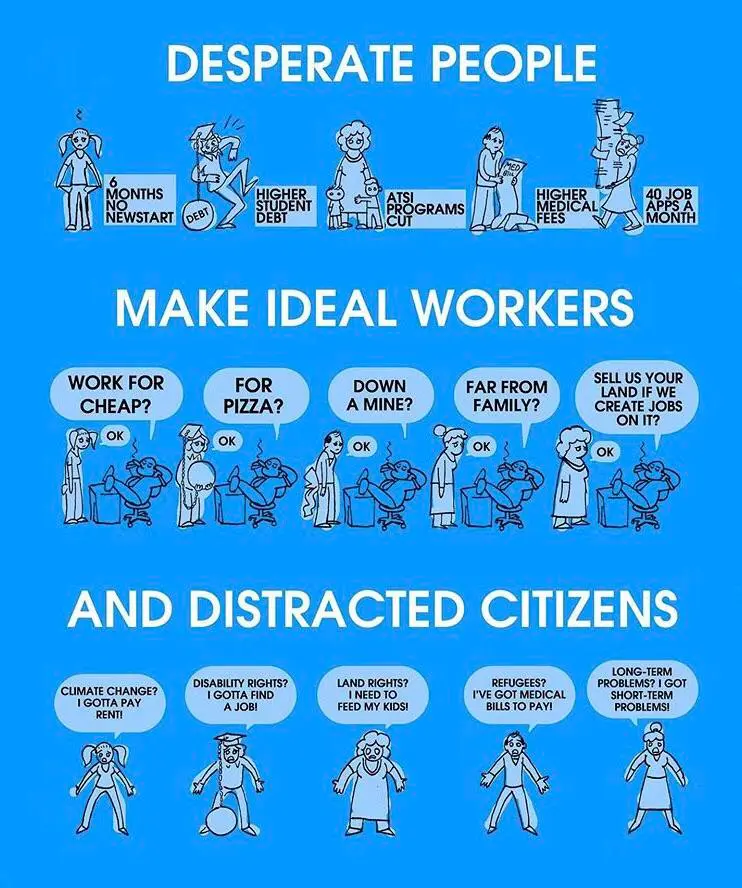

It’s pretty simple really. Liberals midwife a fascist system by justifying capitalist property relations while distracting people from class intersectionality with identity politics.

9·2 days ago

The issues people bring up with Signal are very easy for anybody with a minimally functioning brain to understand, and none of these experts are able to provide a credible answer to them.

The key issue people point out over and over is that Signal runs on a central server hosted in the US that harvests people’s phone numbers on sign up. The users are trusting server operators with their privacy at that point because there is no way to verify how this data is used. Since the server associates real identity with the account, it is in a position to map out networks of people communicating. And if this data is shared with intelligence agencies, which the operators wouldn’t be allowed to disclose, then those agencies can trivially correlate the personally identifiable information with all the other data they have access to.

If there’s a person of interest, and you map out whom that person wants to have private conversations with, that’s very useful data. Once you know that, then you can start tracking all the activities of their associates, and map out a whole network of people. Say, people organizing unions, or coordinating labor strikes, and so on.

This is an obvious problem with Signal, one that doesn’t take any significant expertise to understand, and one that has never been fully addressed. People talk about things like sealed sender, but that doesn’t address the problem I just outlined.

The core issue is that you have to trust the physical infrastructure rather than just the cryptography. The protocol design for sealed sender assumes the server behaves exactly as the published open source code dictates. A malicious operator can simply run modified server software that entirely ignores those privacy protections. Even if the cryptographic payload lacks a sender ID, the server still receives the raw network request and all the metadata attached to it. Your client has to talk to the server and identify itself before any messages are even sent.

When your device connects to send that sealed message, it inevitably reveals your IP address and connection timing to the server. The server also knows your IP address from when you initially registered your phone number or when you requested those temporary rate limiting tokens. By logging the raw incoming requests at the network level, a malicious server can easily correlate the IP address sending the sealed message with the IP address tied to the phone number.

Since the server must know the destination to route the message, it just links your incoming IP address to the recipient ID. Over time this builds a complete social graph of who is talking to whom. The cryptographic token merely proves you are allowed to send a message without explicitly stating who you are inside the payload. It does absolutely nothing to hide the metadata of the network connection itself from the machine receiving the data.
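To make the correlation concrete, here’s a toy sketch of what such a join could look like. This is not Signal’s server code, and every name in it (`registrations`, `sealed_requests`, `build_social_graph`, the log fields) is made up for illustration. It only assumes that a modified server keeps two logs: the IP seen when a phone number registered, and the IP plus recipient ID seen on each sealed message request.

```python
from collections import defaultdict

# Hypothetical log records; none of these names come from Signal's actual server code.
# Log 1: IP address observed when each phone number registered.
registrations = [
    {"ip": "203.0.113.5", "phone": "+15550001"},
    {"ip": "198.51.100.7", "phone": "+15550002"},
]

# Log 2: raw sealed-sender requests. The payload carries no sender ID,
# but the network layer still exposes the source IP and the recipient.
sealed_requests = [
    {"ip": "203.0.113.5", "recipient": "+15550002"},
    {"ip": "198.51.100.7", "recipient": "+15550001"},
    {"ip": "203.0.113.5", "recipient": "+15550003"},
    {"ip": "192.0.2.9", "recipient": "+15550001"},  # IP never seen at registration
]


def build_social_graph(registrations, sealed_requests):
    """Join the two logs on IP address to recover sender -> recipients edges."""
    ip_to_phone = {r["ip"]: r["phone"] for r in registrations}
    graph = defaultdict(set)
    for req in sealed_requests:
        sender = ip_to_phone.get(req["ip"])
        if sender is not None:  # skip requests from IPs we cannot attribute
            graph[sender].add(req["recipient"])
    return {sender: sorted(recipients) for sender, recipients in graph.items()}


graph = build_social_graph(registrations, sealed_requests)
```

In practice IP addresses change and get shared behind NAT or a VPN, so a real correlation would be noisier and would lean on connection timing as well. The point of the sketch is just that nothing in the sealed payload prevents this kind of join.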

This once again makes it very suspicious that Signal insists on running a single centralized server.

32·3 days ago

It is absolutely irrelevant who makes the criticism; what needs to be addressed is the criticism itself. If somebody advises you to simply trust people blindly, then you should be very suspicious of their motivations.

Liberalism is objectively a right wing ideology. Liberalism consists of two main parts. First is political liberalism which focuses on wholesome ideas such as individual freedoms and democracy. Second is economic liberalism which centers around free markets, private property, and wealth accumulation. These two aspects form a contradiction. Political liberalism purports to support everyone’s freedom, while economic liberalism enshrines private property rights as sacred in laws and constitutions, effectively removing them from political debate.

As a result, liberalism justifies the use of state violence to safeguard property rights, over supporting ordinary people, which directly contradicts its promises of fairness and equality. Private property is seen as a key part of individual freedom under liberalism, and this provides the foundational justification for the rich to keep their wealth while ignoring the needs of everyone else. Thus, the talk of freedom and democracy ends up being nothing more than a fig leaf to provide cover for justifying capitalist relations.

It’s always deflection with libs, these people are utterly incapable of honestly engaging with any criticism.