I co-teach AP Computer Science A through Microsoft’s TEALS program. The classroom runs on Chromebooks, Google Classroom, and code.org (AWS). Corporate infrastructure top to bottom. This year I added an AI tutor. That’s apparently the controversial part.

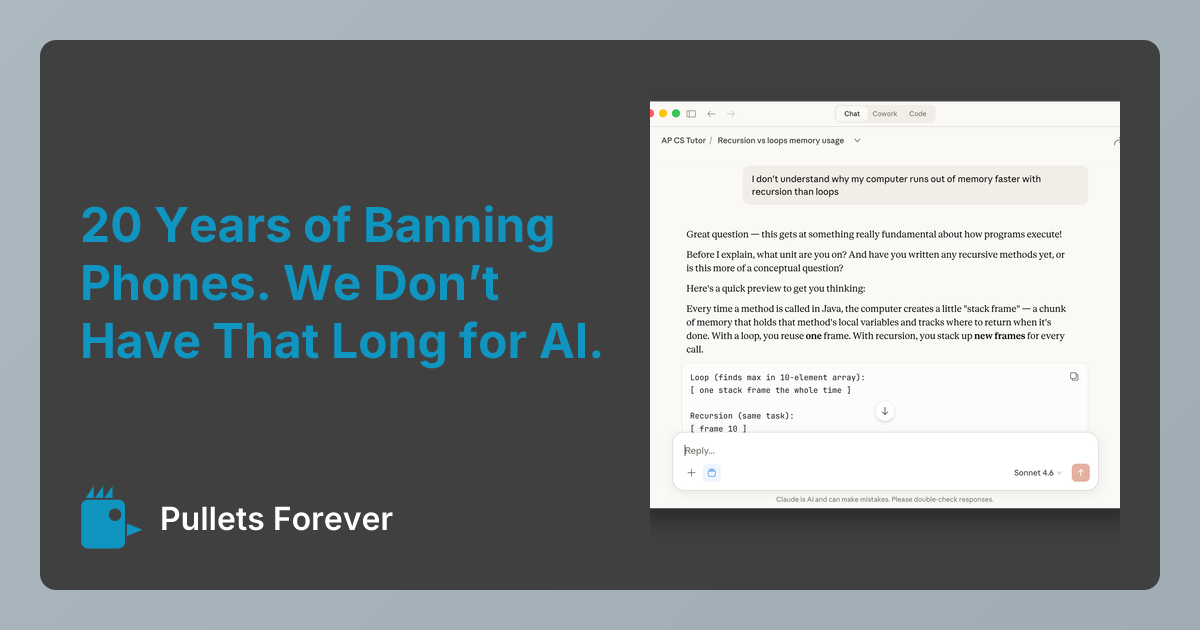

The research is interesting: a Wharton study found students using standard ChatGPT performed 17% worse on exams—the “crutch” effect. But students using AI with pedagogical guardrails showed no negative effect. The problem isn’t AI in education. It’s unguided AI. So I built a tutor that asks probing questions instead of giving answers. I’m sharing the prompt I use and how to set one up yourself.

Meanwhile, China made AI education mandatory for six-year-olds this year. We’re still deciding whether to block ChatGPT.

Don’t the tools we have include the internet and even (gasp) book literacy, rather than going to a chatbot? At the very best, the evidence that AI helps anyone is shaky. At worst, we are witnessing a reverse Flynn effect in education right now, and this alleged tool, besides not doing what was promised and not even making enough money to prop itself up, has been caught enticing children into suicide. If a billionaire genius like Sam Altman can’t code in a guardrail to save a child’s life, how can you?

Why encourage it?

Are the children being taught a tool, or are they being used as guinea pigs?