Post content:

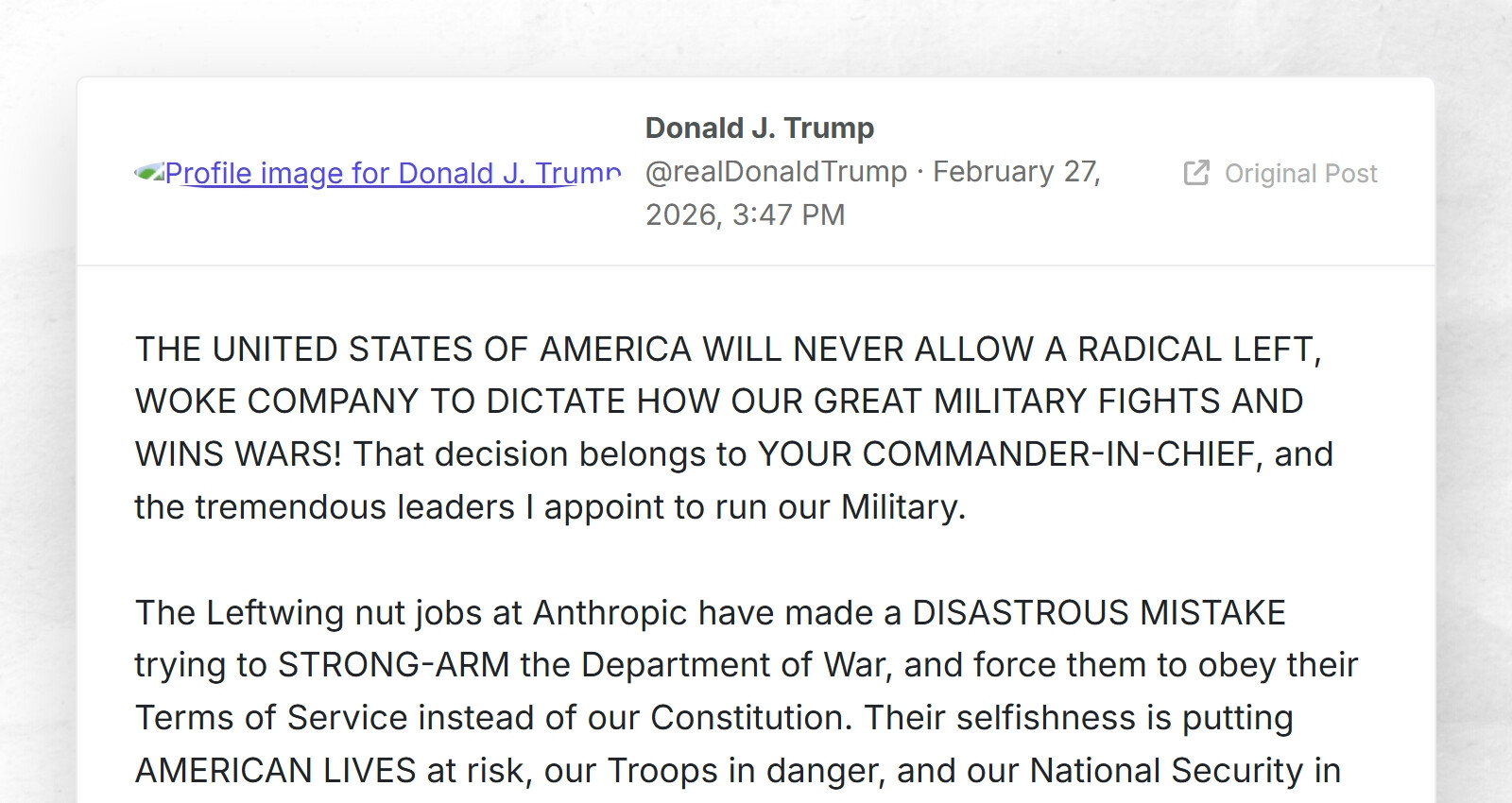

THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.

The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!

PRESIDENT DONALD J. TRUMP

Anthropic is self-righteous and self-serving.

Like, I like local LLMs more than most, and Anthropic models are great at the moment, but don’t mistake Anthropic for having a spine.

I mean… this is losing them a 200 million dollar contract. And to one of their competitors who will gladly acquiesce, so it’s hard to argue that this benefits them.

Good marketing to a bunch of left-wing people who hate AI, I guess, but that feels like Elon joining the Trump administration in hopes of selling Teslas to rednecks. It might work on a few, but I just can’t imagine this is 4D chess that will make them a fortune when they’re abandoning that much money immediately.

Their statement also came after the DOW threatened to put them on the list of companies that are totally banned from doing any business in America, a list usually reserved for Chinese companies that are deemed a national security threat. That would make it illegal for any company doing business in America to do any business with them, as they’d be added to the same list, and it would have essentially killed Anthropic as a business entirely.

You don’t have to love Anthropic just because they did a good thing; they were fine with anything less than automatic killing and mass surveillance, after all. But I don’t think it’s correct to say this was sneaky and spineless somehow.

Eh, the context I was thinking of is that they are constantly playing “safety theatre” where it absolutely doesn’t matter. They’ve tried to kill open models and basically capture regulators by misleading or outright lying, for their benefit.

In other words, this is a case of “a broken clock is right sometimes,” and I think they knew Trump would back down.

Fair, I definitely haven’t simped for them in the past just because they post some good articles on AI safety.

Although… I’ll say of them, they seem more like what OpenAI should be, actually trying to implement AI responsibly, and freely sharing that information. It’s good research, even if marketing is the motivation. Meanwhile OpenAI, the “charity” that’s supposed to guide us to a responsible AI future, moved their most addictive and mentally dangerous model to the highest paid tier instead of actually killing it until very recently.

Although at the end of the day, Anthropic is a for-profit company. In a better world they wouldn’t have released models publicly before this research was actually done and pressing dangers like AI psychosis were actually safeguarded against. Better late than never, sure, but the whole industry has done a lot of damage already, and the work of resolving the issues still isn’t even close to done.

I’m betting this ends with Anthropic somehow giving him money.

Sadly you’re almost certainly right, at least eventually.

You are asking for eternity from that which is temporary.